A recent *study showed that 75% of organizations use a combination of manual and automated testing.

That is a big change from 2010, when I started my blog, which is dedicated to helping folks move toward more automation in their testing efforts.

The number back then was probably around 5–10%.

Even though the number of companies investing in automation has grown substantially, I still see a large gap.

It seems that most of the engineers I speak with are still focusing solely on automated functional tests.

It’s a great start, but many still miss a big opportunity.

End-to-End Automation in DevOps

I believe that if a process can be automated in your software development lifecycle, then it should be.

Many miss out on opportunities to automate in the development, build, and production phases of their pipelines.

That’s why I believe more automation engineers need to start pushing toward end-to-end testing, which includes not only functional automation but also automation for things like:

· Performance testing

· Accessibility testing

· Production reporting

· And the customer experience

And on this last point, I see opportunities for teams to focus on the user.

After all, we’re testing because we want to create better experiences for those who use our app.

Testers are in the best position to lead this; they spend more time than anyone exploring their app.

I’m seeing more automation tooling vendors add functionality in these areas, expanding automation coverage across both functional tests and the “non-functional” tests that were generally considered not automatable.

Looking at mabl

For instance, let’s look at mabl.

When I first spoke to the folks at this firm, they were still in beta mode. Their first focus was on functional UI automation testing.

Over the years, however, I’ve seen them evolve into more of a unified platform for all things software testing.

When I spoke to mabl’s founder Dan Belcher recently, he told me that when they started the company, they only focused on browser testing. Over time, however, they heard from many people that traditional automation testing was hard — especially for folks who didn’t have a programming background.

So, they created a low-code approach to end-to-end test automation.

Low Code Test Automation

You might ask, “How realistic are low-code solutions, anyway?”

When I speak with other automation specialists like Paul Grossman, they say it’s viable. When done right, low code is handy because it optimizes your time.

For example, when working with a low-code tool, you don’t necessarily need to learn an arcane, complex language like Java, JavaScript, Python, or Ruby. Nor do you need to spend time figuring out how to implement your test. You can generally navigate your app like a user and record those steps as a test.

If you want to click on an Add to Cart button, you simply click the Add to Cart button, and that action is recorded as a step in your test.

In theory, this allows folks who might be slightly turned off by programming to contribute to the test automation efforts. It can unlock many other team members who understand the users well enough to create, run and manage efficient, realistic, and reliable test automation scenarios.

For those with coding experience, low-code solutions still allow for a level of control through JavaScript when these solutions don’t support something out of the box.

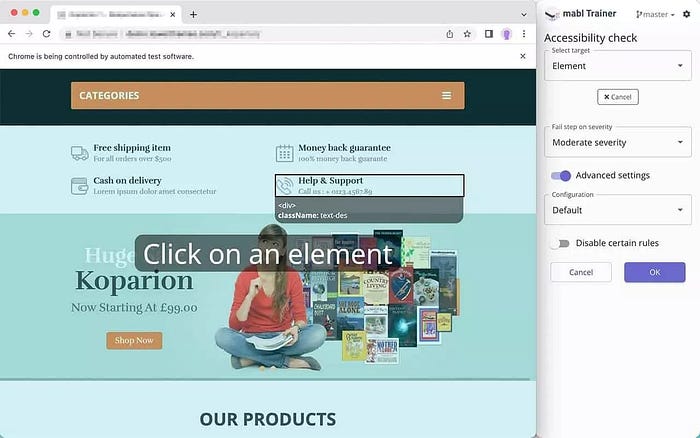

Here’s an example of low-code test creation in mabl. You can record tests through the mabl Trainer and add assertions without needing to write code.

So using a low-code test automation solution like mabl has the added benefit of better test coverage at the user experience level — not only for functional tests but also for non-functional tests.

Another way to grow coverage has to do with machine learning.

Machine Learning

What’s so powerful about using a solution like mabl is that it runs primarily on Google Cloud, which allows it to observe a lot of information as tests execute and to process that information quickly and efficiently.

Running like this allows mabl to handle all the test orchestration complexity. If you’re writing scripts and managing a framework on-prem, managing the infrastructure and analyzing traces, metrics, visual information, logs, accessibility, and test output is a big challenge.

It’s a pain most testers have to deal with when they roll their own stuff.

But if you’re running in the Cloud, there are lots of good services those Cloud providers give you to help open up more testing data.

Having all this data also leads to more reliable tests.

A great example of that is auto-healing.

Automation Testing Auto Healing Feature

Mabl’s auto-healing has built-in heuristics that assign different weights to different element attributes. Any change to those heuristics can be backtested to determine whether it will lead to more reliable tests.
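To make the idea of weighted attributes concrete, here is a minimal Python sketch. It is not mabl's implementation: the attribute names, the weights, the threshold, and the `match_score` and `heal` functions are all hypothetical, chosen only to illustrate how a weighted score could pick a replacement element when one attribute (here, the `id`) changes.

```python
from typing import Optional

# Hypothetical weights: how much each attribute counts toward a match.
# Illustrative values only, not taken from any real tool.
ATTRIBUTE_WEIGHTS = {"id": 5.0, "name": 3.0, "text": 2.0, "class": 1.0}


def match_score(recorded: dict, candidate: dict) -> float:
    """Return the weighted fraction of recorded attributes the candidate still matches."""
    total = sum(ATTRIBUTE_WEIGHTS[a] for a in recorded if a in ATTRIBUTE_WEIGHTS)
    if total == 0:
        return 0.0
    matched = sum(
        ATTRIBUTE_WEIGHTS[a]
        for a, value in recorded.items()
        if a in ATTRIBUTE_WEIGHTS and candidate.get(a) == value
    )
    return matched / total


def heal(recorded: dict, candidates: list, threshold: float = 0.5) -> Optional[dict]:
    """Pick the best-scoring candidate, or None if nothing clears the threshold."""
    best = max(candidates, key=lambda c: match_score(recorded, c), default=None)
    if best is None or match_score(recorded, best) < threshold:
        return None
    return best


# The element as it looked when the test was recorded.
recorded = {"id": "add-to-cart", "name": "add", "text": "Add to Cart", "class": "btn"}

# The page after a release: the id changed, everything else stayed the same.
candidates = [
    {"id": "buy-now", "name": "buy", "text": "Buy Now", "class": "btn"},
    {"id": "add-to-cart-v2", "name": "add", "text": "Add to Cart", "class": "btn"},
]

healed = heal(recorded, candidates)
```

Because the weights live in one table, "backtesting" a change in this sketch would simply mean replaying recorded element changes against the old and new weights and comparing how often the right element is picked.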

The team at mabl also told me they work with their customers directly to fine-tune their ML engine based on their architecture.

FYI: according to another recent study (buildkite), 59% of developers deal with brittle tests on a daily, weekly, or monthly basis.

Think about how much time fewer brittle tests would save you and your team.

Another example of intelligence helping testers is around waiting for elements to display. Usually, cloud tests execute pretty quickly, sometimes so quickly that a test reaches a step before your application has finished loading. To avoid issues, testers typically add fixed waits, and those waits add up over time.

Mabl learns the behavior of your system and waits only for the time necessary — executing the tests exactly when the elements are ready. For example, instead of waiting for 5 seconds by default, it may wait only half a second because it knows that historically that’s the longest it took for the element to render. This speeds up execution and helps to reduce false negatives.
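The difference between a fixed wait and a condition-based wait can be sketched in a few lines of Python. This is an assumption-laden illustration, not how mabl works internally: `wait_until` and `timeout_from_history` are hypothetical helpers, and the 1.5x safety margin and 0.5-second floor are arbitrary values picked for the example.

```python
import time
from typing import Callable, Sequence


def wait_until(is_ready: Callable[[], bool], timeout: float = 5.0,
               interval: float = 0.05) -> float:
    """Poll is_ready until it returns True and return the seconds waited.

    Raises TimeoutError if the condition is not met within `timeout` seconds.
    """
    start = time.monotonic()
    while True:
        if is_ready():
            return time.monotonic() - start
        if time.monotonic() - start >= timeout:
            raise TimeoutError(f"condition not met within {timeout} seconds")
        time.sleep(interval)


def timeout_from_history(render_times: Sequence[float], margin: float = 1.5,
                         floor: float = 0.5) -> float:
    """Derive a wait budget from past render times (slowest run times a margin)."""
    if not render_times:
        return 5.0  # no history yet: fall back to a conservative default
    return max(floor, max(render_times) * margin)
```

With a history of `[0.2, 0.5, 0.3]` seconds, the budget comes out to 0.75 seconds instead of a blanket 5, and `wait_until` returns as soon as the element is ready rather than sleeping for the full budget.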

Machine learning to replace engineers?

A major misconception about machine learning (ML) is that it will replace software engineers, but it’s not about replacement; it’s more about augmenting.

Using insight produced by machine learning requires a team to decide how to move forward.

Let’s be honest: I’ve been involved with automated testing for almost 25 years, and sometimes it’s still a struggle.

Even more nowadays, when you throw your whole team into the mix.

I’ve seen team after team trying to keep up with functional quality as development velocity has increased. And there’s always the desire to extend testing to non-functional quality, but never enough time.

So, if you leverage more advanced AI and machine learning techniques over time, they should give you some core automation coverage, enough to let you focus on higher-value activities like:

· UX

· Strategy

· Collaboration

· Performance

· Ensuring your software is accessible

You want to allow testers to spend time on these things instead of the reactive stuff on which many teams have been stuck.

How should teams approach these new areas, such as accessibility and performance?

Non-Functional Test Coverage

Mabl has gradually added other features to help make it easier for someone to create end-to-end tests.

For instance, over the past few years, they’ve built performance insights and accessibility checks into those low-code, end-to-end tests. This helps teams get a bigger bang for their buck by reusing the functional tests they already run.

Dan mentioned that numerous mabl customers reported that after building out all their tests in mabl, they had to recreate them in a different tool to cover things like accessibility testing.

Baking this feedback into mabl with those requested features allows users to leverage their existing functional tests and perform other critical, non-functional checks.

Without creating a new test in mabl, add an accessibility check for common accessibility issues, such as color contrast.

Understand all of the accessibility issues across your application, categorized by failure reason, and trend that data over time.
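For a sense of what a color contrast check actually computes, here is a small Python sketch of the WCAG 2.x contrast ratio formula. It is independent of mabl and says nothing about how mabl runs its checks; the function names are my own, and the 4.5:1 and 3:1 thresholds are the WCAG AA levels for normal and large text.

```python
from typing import Tuple

RGB = Tuple[int, int, int]


def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light, per the WCAG 2.x definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: RGB) -> float:
    """Relative luminance of a color: 0.0 for black, 1.0 for white."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground: RGB, background: RGB) -> float:
    """Contrast ratio between two colors, from 1.0 (identical) to 21.0 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: RGB, background: RGB, large_text: bool = False) -> bool:
    """True if the pair meets WCAG AA: 4.5:1 for normal text, 3:1 for large text."""
    return contrast_ratio(foreground, background) >= (3.0 if large_text else 4.5)
```

For example, `passes_aa((118, 118, 118), (255, 255, 255))` is true, while the very slightly lighter gray `(119, 119, 119)` on white falls just under the 4.5:1 line. That is exactly the kind of near miss that is hard to catch by eye and easy to catch automatically.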

As I mentioned earlier, this is a trend I’ve been seeing other vendors follow as well.

Another trend I’ve seen popping up is how all these components come together into a quality engineering concept.

Quality Engineering Mindset

Three concepts make up quality engineering.

1. Quality Engineering Focused Team

For one, a quality-engineering-focused team is building quality from the minute a developer begins working on a branch locally through to production. In contrast, the traditional QA team sometimes receives a deliverable from the development team ready to be tested.

Mabl offers functionality to aid the development and build stages, such as a CLI, which we’ll cover later in this article.

2. Coverage

Second, a quality-engineering-focused team thinks about coverage much more ambitiously.

They want enough automated test coverage that they can truly trust it. The difference between feeling like you have excellent test coverage and actually trusting it is the difference between being able to deploy automatically and needing to verify manually before you feel that level of comfort.

Once again, mabl has advanced test coverage reporting that can analyze production and notify the team of any potential gaps in their coverage. It does this by applying machine learning to the data produced in CI/CD.